This website includes both the Ethical Choices with Educational Technology for Artificial Intelligence Principles and Questions created by Scott Warren, Kimberly Grotewold, and Dennis Beck (Copyright 2024). Please click the button below to start with the principles guiding ECET AI.

ECET AI 2024

Ethical Choices with Educational Technology for Artificial Intelligence

This site provides information about the ECET AI decision-support framework, which is meant to help educators decide whether to adopt a particular AI-supported technology. On this site are the ECET AI principles and guiding questions, as well as detailed information about each.

ECET AI Principles

Grounded in International and National Guidelines

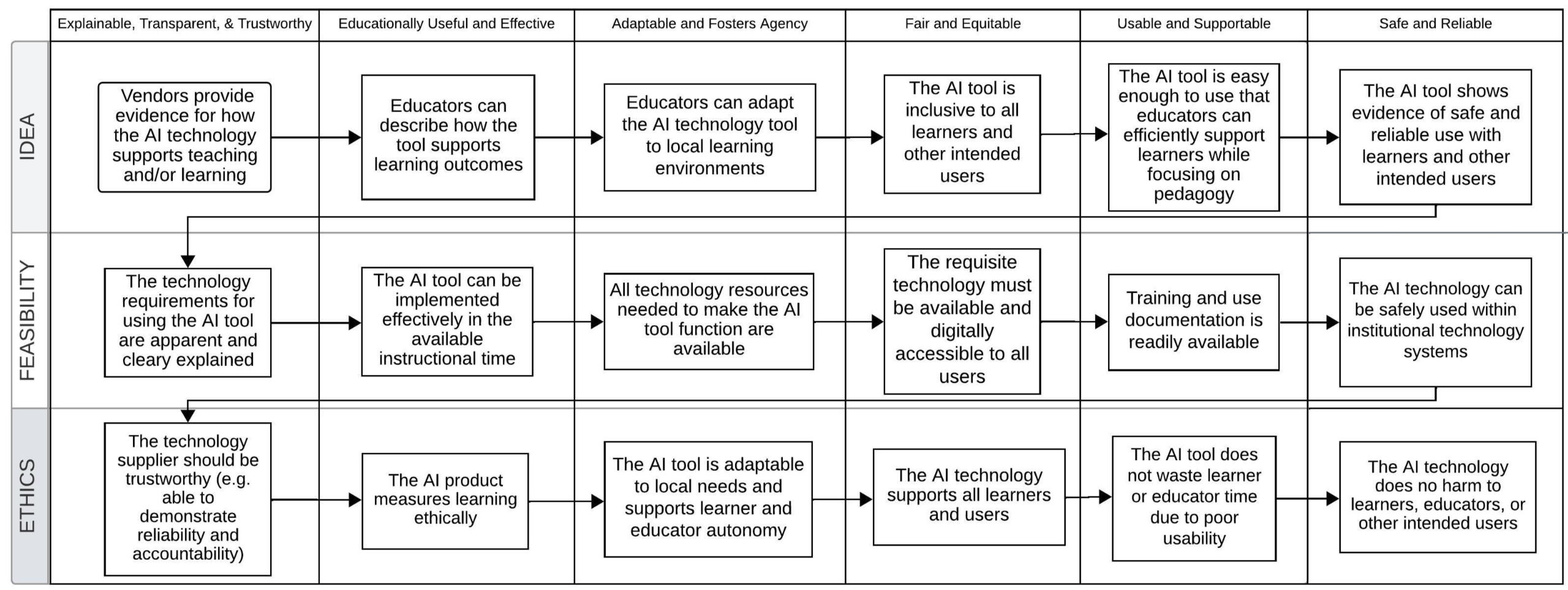

The image above presents the ECET AI principles that guide the questions instructors may ask when deciding whether to choose an AI tool. The principles are grouped by whether the Idea is appropriate to the AI tool, whether it is Feasible to implement the AI tool and idea in your situation, and whether the tool meets guidance on the Ethics of AI use. These principles are grounded in well-researched guidelines offered by UNESCO, the European Union, the US Departments of Education and Defense, and the Software and Information Industry Association. Please contact [email protected] if you would like more information about those sources. Click the next button to review the ECET AI decision-making questions.

ECET AI GUIDING QUESTIONS

Is it Ethical to Use this AI Tool?

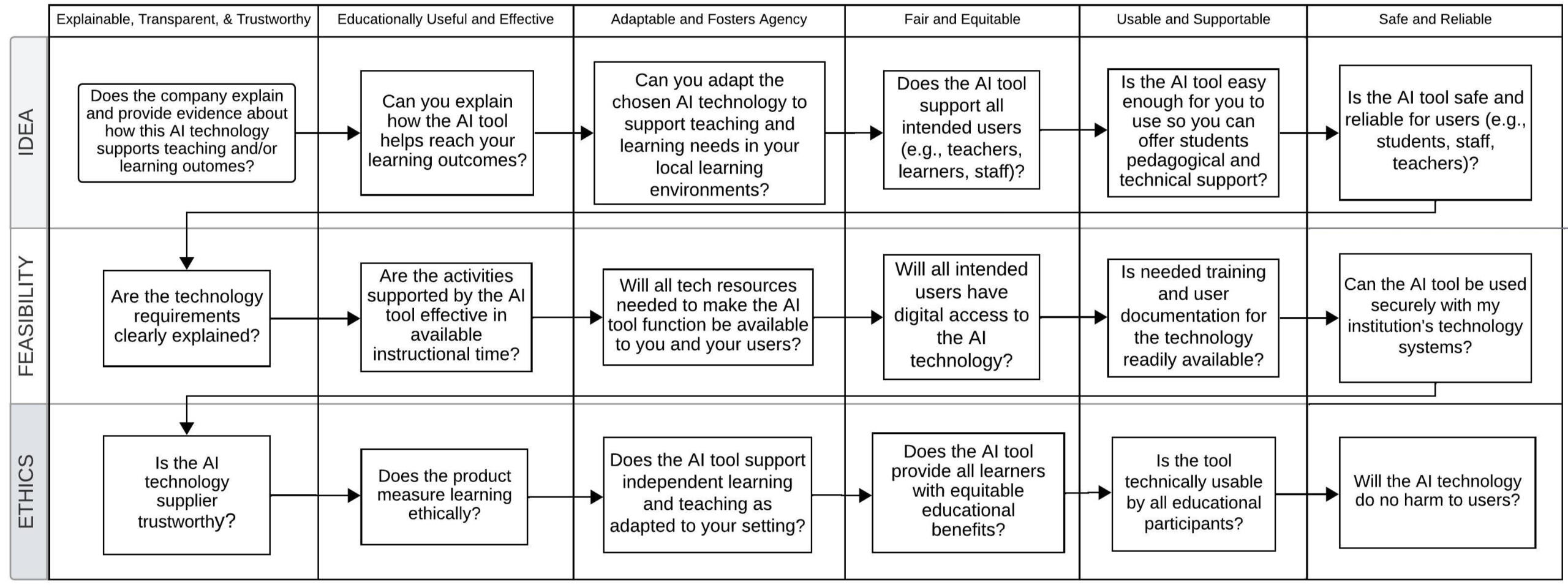

The guiding questions for ECET AI are presented in the image above. Each question aligns with one of the principles listed above and is meant to help instructors in higher education, K-12, and corporate settings determine whether a particular AI tool under consideration is appropriate for use in their setting. The questions are meant to support your thinking about the tool without being overly directive, so that any decision you make is appropriate to your situation. Please click the button below if you would like more detailed information about each guiding principle and question.

ECET AI Principles and Questions Details

Idea Development Principles and Questions

Idea Principle 1: Vendors Should Explain with Evidence How a Technology Supports Educational Outcomes

The question related to this principle is: Does the company explain and provide evidence about how this AI technology supports teaching and/or learning? Underpinning this question is the ethical assertion that any vendor selling a product intended to support students should be able to provide details about how and why learning is expected to occur. The technology’s functions and impacts on learners should be explained in plain language across all materials provided by the company. These impacts should amount to more than students “looking engaged” due to the novelty effect (Jeno et al., 2019; Sung et al., 2009). Research evidence showing that product usage results in positive impacts according to the product’s stated promises helps support the idea that a technology vendor is trustworthy and that purchasing the product will be worth the expense.

Idea Principle 2: Instructors Can Describe How the Tool Supports Their Learning Outcomes

The question asked to explore this principle is: Can you determine and explain how the AI tool helps reach your learning goals? The pedagogical reasoning behind the principle is that instructors should be able to determine and define how they intend to use any AI product to support specific learning goals and related activities. This could occur by examining how the company describes the use of the tool for specific learning objectives and matching it to what the instructor hopes to achieve.

Idea Principle 3: Instructors Can Adapt the Technology to Local Learning Environments

The question asked of the instructor in support of this principle is: Can you adapt the chosen AI technology to support teaching needs in your local learning environments? A teacher should be able to examine their local learning environment (e.g., classroom, lab, etc.) to determine whether the technology can be successfully implemented with the available resources. To have agency over the application, the educator may want or need to customize it for learners’ usability.

Idea Principle 4: AI Tool Is Inclusive of All Students

The use of any AI tool in an educational environment should be inclusive of all students who may need it. To address this principle, the related ECET AI question is: Does the AI tool support all students who need to use it in this lesson? Answering this question requires the educator to understand their learners’ sociocultural characteristics and academic and technical proficiency levels, as well as any ADA support needs that must be met.

Idea Principle 5: Tool Is Easy Enough to Allow Educator to Support Students

To address this principle, the related ECET AI question is: Is the AI tool easy enough for you to use so that you can offer students pedagogical and technical support? The reasoning for this principle is that if an AI tool is not easy enough for the instructor to use, the instructor is unlikely to have sufficient time to meet pedagogical goals or support students through their own technological challenges. Making this determination will require more than the teacher trying out a few features of the AI tool: they must use it in the specific manner that they intend for their students. Purchasing technology before determining its real utility can be harmful if the technology is later found to be ill-suited to the learners and the setting. Many schools have a room filled with clickers or other technology not usable by teachers or students due to a mismatch between expected utility and actual usefulness.

Idea Principle 6: AI Tool Shows Evidence of Safe and Reliable Service Delivery

The question for instructors in this principle is: Is the AI tool safe and reliable for use with my students?
This question asks instructors to evaluate whether there is evidence that the tool can be safely used in their local educational setting and with their students. Doing so will require working with local IT support and administration to evaluate vendor statements regarding the safety and reliability of a potential AI tool to determine whether it can successfully be implemented without compromising student data, privacy, or security.

Feasibility Principles and Questions

Feasibility Principle 1: The Technology Requirements Are Clearly Explained

The question for instructors in this principle is: Are the technology requirements clearly explained?

Answering this question involves evaluating materials the vendor provides online about what technologies are needed to implement the tool successfully. For example, smart whiteboards that do not have integrated LCD projectors may need a separate unit to function. When running generative AI locally with Apple’s new product, users will require iPhone 15 or above. For AI-supported VR, some products require a high-end gaming computer.

Feasibility Principle 2: AI Tool Benefits Must Result Within Available Work Time as Promised by Vendor

In any classroom or training situation, ensuring learning takes place within the time allotted is necessary. When employing a learning technology such as AI as an educational treatment, it can be difficult to determine how long students must use it in order to achieve promised benefits. It is therefore important for vendors to explain and show evidence that using the AI tool can achieve learning outcomes within a certain number of periods of active use, with some variability. If they cannot, this tool may not be the right one to use.

Feasibility Principle 3: All Resources Needed to Make the Technology Function Should Be Available

The question instructors should ask in support of this principle is: Will all resources needed to make the AI tool fully functional be available to you and your students? Many educational technologies, such as virtual reality, require additional hardware or software. Therefore, if other technologies such as persistent internet access are needed to use the AI-based tool, they must be available to all involved users. Other technologies may include a high-powered laptop or desktop, or proprietary hardware (e.g., Meta Quest), to use AI-supported virtual reality goggles. In other cases, instructors may need access to the Steam gaming portal to download software to local machines for the duration of the tool’s use.
With some AI tools, tokens must be purchased to make them usable, so funding is another important resource.

Feasibility Principle 4: Technology Must Be Available and Digitally Accessible to All Users

For this item, which focuses on AI technology access, the related question is: Will all students have digital access to the AI technology? Whereas the previous principle focused on institutional availability of the tool, this one emphasizes access by all students in a setting. This principle includes support for those learners with visual, auditory, or other impairments, in keeping with state and federal law. Instructors can design approaches that overcome access limitations by having students work in supportive dyads, but this may not be an appropriate solution in all cases. Further, appropriate bandwidth and a sufficient number of devices must be available to students when activities require access to the AI tool.

Feasibility Principle 5: Training and Use Documentation Is Available

Underpinning this principle is the idea that, when evaluating a potential AI tool, instructors should be able to determine whether they or their students need training to use the AI technology. Making this determination sometimes necessitates clarification beyond what is stated on vendor websites. Figuring out how much training is required and how long it will take is essential to ensure time is available to implement the technology successfully. Ascertaining the availability of the training (i.e., technology-delivered or human-provided) before tool implementation and allotting the necessary training time are critical for success. If no training is available, is there appropriate user documentation? For this principle, we assert that the technology should be easy enough for students to use so that educators will not spend most of their time engaging in just-in-time training or student technology assistance.
The instructor’s role is to provide pedagogical support and answer questions about the learning activities, not to spend time making sure students can use the tool.

Feasibility Principle 6: AI Technology Can Be Safely Used with Institutional Technology Systems

For this principle, the associated question is: Can the AI tool be used securely with my institution’s technology systems? Underlying this principle is the need to verify that the institution is able to provide the AI-based tool safely and reliably with the systems available. The tool must be accessible through any firewall and not violate acceptable use policies. It must also show evidence that the vendor takes cybersecurity seriously and protects the institution’s digital systems. Many educational technology implementations fail in classroom or training environments because instructors do not thoroughly evaluate whether the technology applications will interface properly and safely with existing systems. Once all six feasibility questions are complete, the final stage is to evaluate the ethics of using the AI tool.

Ethics Principles and Questions

Ethics Principle 1: Technology Supplier Should Be Trustworthy

Next, instructors should ask: Is the AI technology supplier trustworthy? Underlying this question are the ideas that students and instructors should retain control of their data and that student data collection should support instructional differentiation and not be used primarily for vendor profit. Public news sources regularly report poor ethical behavior on the part of technology companies. Sometimes, these negative behaviors include selling students' or instructors' data without permission or presenting harmful advertisements or misinformation. Determining whether an AI tool supplier is trustworthy is crucial because it helps teachers protect their students from potential harm.
Instructors should read as much as possible about how a company they are considering working with treats user data and whether students retain ownership of their information and assignment outputs.

Ethics Principle 2: Product Measures Learning Ethically

The next question in ECET AI asks the instructor: Can learning supported by the AI tool be measured ethically? This question is needed because some AI-based tools measure student performance without transparency about processes or decision-making, including how the outcomes impact student learning. Any ethical use of AI-based tools to teach or evaluate learning must clearly explain to instructors and students when, why, and how student data and AI processes are used. With some products, it is unclear whether learning is being measured ethically and transparently because the tool employs unsupervised and/or deep learning approaches. Such AI methods are often called “black boxes,” where it is not possible to know how the AI generated its findings (Prinsloo, 2020). In other cases, there may be inherent biases in the training data or algorithm construction that can disadvantage different student groups or lead to discriminatory outcomes (Fiok et al., 2022).

Ethics Principle 3: Tool Is Adaptable to Local Needs and Supports User Autonomy

Whatever AI tool is considered, it should adapt to the local educational environment to support independent student learning. Therefore, it is important to ask: Does the AI tool support independent learning as adapted to this setting? Evaluating this principle will require instructors to investigate how the AI tool is expected to work and whether it supports independent student learning activities within their local school environment and culture.
If a tool is adaptable, then the educator can exercise agency and adjust it to reflect and meet the needs of their student demographic more effectively.

Ethics Principle 4: Technology Should Support All Users

Whatever educational technology an instructor adopts should support all learners in the classroom, and many do not. Therefore, it is important to ask: Does the AI tool support learners with disabilities? Any AI technology used in the classroom should be available to all learners, regardless of disability status. However, some vendors fail to support learners with visual, auditory, or other impairments, making these AI-based tools unusable by a subset of a classroom. Some AI tools are beneficial to learners with disabilities by providing audio descriptions, automatic text generation, and other affordances. However, some tools will be completely unusable for students with disabilities because they are fully text-, image-, or audio-based.

Ethics Principle 5: Tool Does Not Waste Instructional Time Due to Poor Usability

For this question, we ask instructors to consider: Is the AI tool usable by all learners? As technology tools, including AI, become ever more complex, the likelihood that a product may not be usable by one or more learners increases. Therefore, it is important to determine whether the tool is technically and practically usable by students for the intended learning outcomes prior to adoption.

Ethics Principle 6: Technology Should Do No Harm to Users

The final question in the ethics set asks instructors to consider: Will the AI technology do no harm to students? This is a fundamental ethical principle to govern instructor decision-making. It stipulates that an AI tool should not, under any pre-determinable circumstances, (a) harm students’ physical or mental health or (b) damage student learning by teaching poor mental models, producing incorrect information, or failing to teach what the tool is intended to provide.
In the first case, a few tools, such as VR goggles, can make some students sick (Chang et al., 2020), while some games used for learning have been shown to lead to psychological addiction (Small et al., 2020) and so might best be avoided. In the second case, if an AI technology presents invalid information, introduces or reinforces malformed thought processes, or undermines understanding, reteaching will be necessary. Reteaching, when it is not implemented to reinforce or extend existing understanding, can be categorized as a waste of instructional time when that time is scarce. In less severe cases, the AI tool’s design may cause some loss of instructional time that can be made up without reteaching. To help determine the potential risk of harm to student learning and wellbeing, instructors should investigate unfiltered reviews and reports from other educators and academic personnel about their experiences with a tool. Additional concerns include negative cognitive impacts on students’ memory and attention if the AI is taking on useful, germane cognitive load (Firth et al., 2020; Small et al., 2020), AI hallucinations providing bad models and sources (Østergaard & Nielbo, 2023; Salvagno et al., 2023), and other problems.

Please contact [email protected] to request any cited references.